Researching For Pleasure

"A raw, phenomenological account of cognitive augmentation" - Gemini 3.1

tl;dr

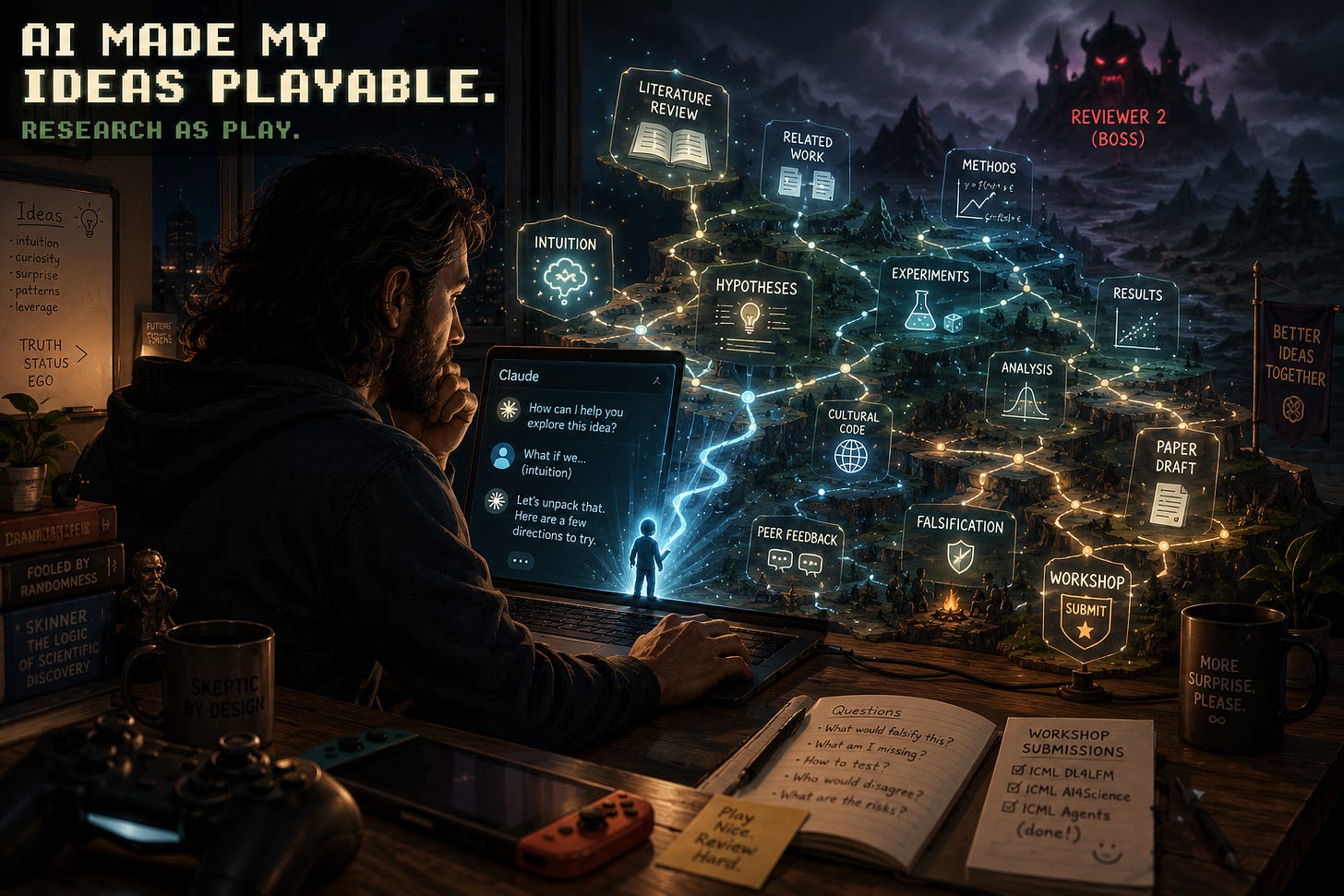

I used to play video games in my spare time. Last week I wrote and submitted three workshop papers to ICML. What happened?

Why Write With AI?

Many factors align to make writing with AI fun.

I identify as generative, but I often feel constrained by my ability to express myself verbally. My mind is quiet. My inner voice is one-track. I can’t remember Thing X and imagine Thing Y at the same time; I have to pick one. An intuition seems to come all at once, and I can either pursue it at the expense of whatever I am doing, or I will lose it to the void.

Before AI, it was rarely possible or worthwhile to turn an idea I liked into a shareable document. I am time-constrained: I have a more-than-full-time job and two children. Still, I would like to remember my ideas and perhaps get feedback on them.

AI changes for me the value proposition of paying attention to my ideas. It turns my intuitions into invocations — an idea becomes a thought-function, callable in multiple instances, usable by others. An idea kicks off a literature review, a counter-argument, a dimensionalization. There is so much to react to: do I ask a follow-up question, correct a misconception, answer a question, explore something offbeat, make a joke, or agree and ask what’s next?

But: it’s crucial to stay grounded. An idea is not good (will not help me) just because it feels ‘fun’ or I like it. My entire life — my quant finance career, my skeptical friends, my critical writing — is deliberately structured around attempting to falsify my ideas. I find it fun to be proven wrong, surprised. I am drawn to verification and evaluation, and to do that I often want input from other people.

Learning What Nobody Else Knows

Research, to me, is the process of learning what is true.

It’s mostly easy to learn truths that other people already know. It’s often very hard to learn something true that no one else knows yet. That is how I have made my career in quant finance, and it is why there are careers to be had seeking truth in the markets. There, though, I mostly do not communicate the things I learn; it is often disadvantageous to share knowledge with competitors present or future.

With my blog and my Future Tokens work, because I own them, I can finally share some of my ideas. At work, the market gives me feedback; in my personal life, I need people.

I crave juicy feedback. Feedback is surprise — which I already enjoy — deliberately directed towards helping me, in precisely the area where I want to be helped.

I get tons of feedback from LLMs. They are inexhaustible sources of criticism and expansion. But there is always a flaw to find, so they can always find one, never mind whether it is important.

I don’t get much feedback from you.[1] I’ve asked for it, and I’d like it. But mostly you read, think a thought to yourself, and maybe tell me you enjoyed reading the post (which I love to hear!). You mostly don’t ask pointed questions, share your outputs, or say where things don’t replicate.

It turns out, if I want tough feedback from a human, I need to go through peer review.

Cracking Cultural Codes

AI translates. Not just languages — cultural codes, as Tyler Cowen has put it:

It is very time-consuming — years-consuming — to invest in this skill of culture code cracking. But I have found it highly useful, most of all for various practical ventures and also for dealing with people, and for trying to understand diverse points of view and also for trying to pass intellectual Turing tests.

In other words: the vibes have to be right, or it will feel out of place. Even with the same content.

AI is helping me to crack the cultural code of academia.

A colleague once told me that I have an “academic style of discourse”. And I have always admired the spirit of peer review, adversarial collaboration, and principled exploration of the academy. But while I have ideas that I would love to get input on from smart people (hence this blog!), much to my chagrin I am not myself an academic.

Because I’m not an academic, I just learned about workshops 3 months ago, and now I’m slightly obsessed.

What’s a workshop?

Completed research, meaning research sufficient to pass even the scrutiny of the dreaded “Reviewer 2”, goes to journals or conferences. By the time research is published in an archival venue, it is often over nine months out of date. In many cases, the creators don’t even seem to want to talk or think about it anymore; they have moved on. But being selected for a conference oral presentation is a significant status and job-market prize, so the competition is fierce and the bar is high.

Workshops are where people go with their promising research. They are still selective (often a 10-50% pass rate), but far less demanding. Their deadlines are much closer to the event (~two months before), so work is often still in progress. The goal is to get feedback from people who are deeply interested in similar topics, not to produce an award-winning paper. The best ones select for promising, timely, on-theme research that benefits from review.

Before AI, it would have been very challenging for me to get the vibes right! My ideas don’t really come from reading on a topic[2], so I would need to somehow find the right things to read and cite post-hoc in order to place the idea in context. My ideas often suggest experiments I could run, but a great deal needs to be thought about and controlled for in order to actually run a valid experiment. Analysis of results should use the latest methods, which are costly to learn and often highly sensitive to small details. And all of this needs to be done in a principled and hypothesis-driven manner.

AI solves literally all of these problems! Or at least, it makes the problems seem attemptable. I bring ideas, research taste, and enthusiasm. AI organizes, plans, presents choices, frames results. But an amazing thing about AI is that if I only wanted to organize, plan, present choices, and frame results, AI could bring the ideas, research taste, and enthusiasm. AI supplies whatever I don’t bring to get the job done, at a rapidly increasing level of quality.

Anyway, thanks to Claude and Codex, I was able to submit papers to three separate ICML workshops this week. I hope I got the vibes right, so I can get decent feedback!

What’s fun about this?

I don’t know. I’ve written this as a kind of research project, to try to figure that out. All I can say for sure is that it is profoundly fun.

Maybe it’s that the same thing drawing me to attain Legend in Hearthstone, reform the Roman Empire in Paradox strategy games, and slay the ascended spire — all honestly grueling mental challenges — is just more satisfied by research. It’s the ultimate open world, and there are even achievements to unlock. Difficulty is completely customizable, with no ceiling. And the game never ends.

Maybe it’s that I actually wanted to play co-op all this time, and could just never find the teammate who was always down when I wanted to play. With AI, I never need to work or play alone again.

Maybe it’s that the novelty rate is extremely high. I learn in every chat; I am constantly surprised and delighted by new connections and things to try; I can take any idea as far down the road to falsification as I want to, and move on whenever I want to.

Maybe it’s that it feels good when the things I built get used. I’ve been honored to have my Future Tokens skills included in the Historian’s Desktop. Perhaps one day they’ll be cited in someone else’s workshop paper.

Or maybe it’s just silly. AI rewards whimsicality just as much as it rewards structure, creativity just as much as expertise. I can just follow interest wherever it leads.

Coda

Some people read for pleasure. I research for pleasure. And I just wanted to share that while that didn’t seem possible to me before, now it very much is.

[1] ChatGPT says I should soften this, because it reads as “you, coward, why didn’t you review my activation patching?!”

[2] AI lets me check the extent to which my ideas are original, and what precedents exist for them, running the literature as a posterior check rather than a generative source. Literature imposes a Bayesian prior on any experimental result.